A summary of the methods and lessons I have found most important while learning AI and deep learning.

The focus is mainly on models.

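The Wild Era

Around 2010, neural network algorithms that had seemed computationally infeasible started to become popular, driven by two developments. First, with the rise of internet companies serving hundreds of millions of online users, large-scale datasets became readily available. Second, cheap, high-quality sensors, cheap data storage (Kryder's law), and cheap computation (Moore's law), and in particular the spread of GPUs, put large-scale compute within easy reach.

Classic CNN Models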

LeNet

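Each convolutional block consists of a convolutional layer, a sigmoid activation, and an average pooling layer. ReLU and max pooling work better, but they were not yet in common use in the 1990s. Each convolutional layer uses a 5×5 kernel followed by a sigmoid activation. These layers map the input to a number of 2D feature maps, usually increasing the number of channels along the way: the first convolutional layer has 6 output channels and the second has 16. Each 2×2 pooling operation (stride 2) downsamples spatially, reducing the number of elements per channel by a factor of 4. The output of a convolutional block has shape (batch size, channels, height, width).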
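The shape bookkeeping described above can be checked by hand. The sketch below is only an illustration: the helper names are made up, and it assumes a 28×28 single-channel input (the Fashion-MNIST size used elsewhere in this post) together with the padding and stride values of the LeNet code in this section.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output side length of a convolution or pooling layer on a square input."""
    return (size + 2 * padding - kernel) // stride + 1


def lenet_feature_shape(size=28):
    """Trace (channels, height, width) through LeNet's two convolutional blocks."""
    size = conv_out(size, kernel=5, padding=2)  # first conv, 6 output channels
    size = conv_out(size, kernel=2, stride=2)   # 2x2 average pooling, stride 2
    size = conv_out(size, kernel=5)             # second conv, 16 output channels
    size = conv_out(size, kernel=2, stride=2)   # 2x2 average pooling, stride 2
    return 16, size, size


channels, height, width = lenet_feature_shape(28)
print(channels, height, width, channels * height * width)  # 16 5 5 400
```

The final product, 400, is the `16 * 5 * 5` input size of the first fully connected layer.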

    import torch
    from torch import nn

    net = nn.Sequential(
        nn.Conv2d(1, 6, kernel_size=5, padding=2), nn.Sigmoid(),
        nn.AvgPool2d(kernel_size=2, stride=2),
        nn.Conv2d(6, 16, kernel_size=5), nn.Sigmoid(),
        nn.AvgPool2d(kernel_size=2, stride=2),
        nn.Flatten(),
        nn.Linear(16 * 5 * 5, 120), nn.Sigmoid(),
        nn.Linear(120, 84), nn.Sigmoid(),
        nn.Linear(84, 10))

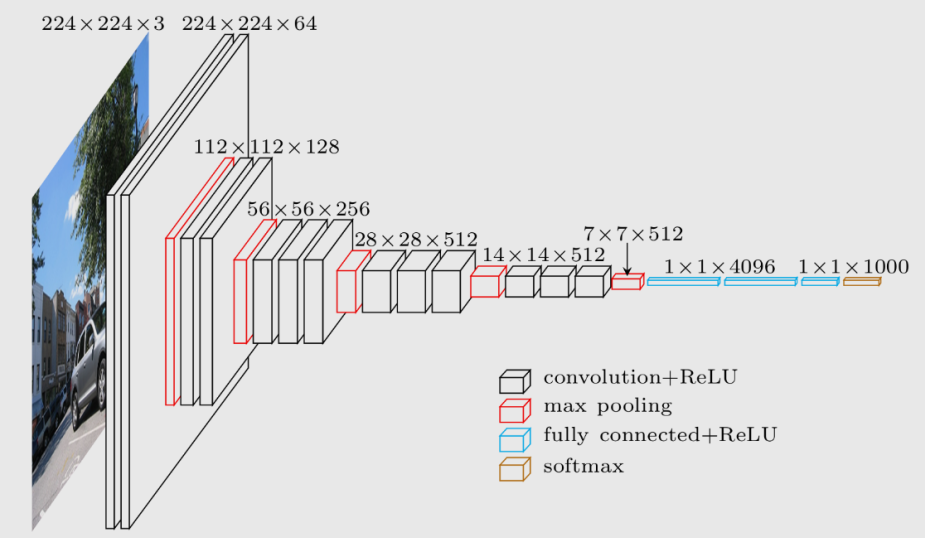

AlexNet

    import torch
    from torch import nn

    net = nn.Sequential(
        # Use a larger 11x11 window to capture objects.
        # A stride of 4 reduces the output height and width.
        # The number of output channels is also much larger than in LeNet.
        nn.Conv2d(1, 96, kernel_size=11, stride=4, padding=1), nn.ReLU(),
        nn.MaxPool2d(kernel_size=3, stride=2),
        # Shrink the convolution window, use a padding of 2 so that the input and
        # output height and width match, and increase the number of output channels.
        nn.Conv2d(96, 256, kernel_size=5, padding=2), nn.ReLU(),
        nn.MaxPool2d(kernel_size=3, stride=2),
        # Three consecutive convolutional layers with a smaller window.
        # The number of output channels grows further, except in the last one.
        # No pooling layer follows the first two of these convolutions.
        nn.Conv2d(256, 384, kernel_size=3, padding=1), nn.ReLU(),
        nn.Conv2d(384, 384, kernel_size=3, padding=1), nn.ReLU(),
        nn.Conv2d(384, 256, kernel_size=3, padding=1), nn.ReLU(),
        nn.MaxPool2d(kernel_size=3, stride=2),
        nn.Flatten(),
        # The fully connected layers are several times wider than in LeNet.
        # Dropout layers mitigate overfitting.
        nn.Linear(6400, 4096), nn.ReLU(),
        nn.Dropout(p=0.5),
        nn.Linear(4096, 4096), nn.ReLU(),
        nn.Dropout(p=0.5),
        # Output layer. Fashion-MNIST is used here, so there are 10 classes
        # rather than the 1000 in the paper.
        nn.Linear(4096, 10))

VGG

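    import torch
    from torch import nn

    # vgg_block and conv_arch were not shown in the original snippet. The definitions
    # below are an assumption, following the usual d2l formulation (VGG-11 layout).
    def vgg_block(num_convs, in_channels, out_channels):
        layers = []
        for _ in range(num_convs):
            layers.append(nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1))
            layers.append(nn.ReLU())
            in_channels = out_channels
        layers.append(nn.MaxPool2d(kernel_size=2, stride=2))
        return nn.Sequential(*layers)

    conv_arch = ((1, 64), (1, 128), (2, 256), (2, 512), (2, 512))

    def vgg(conv_arch):
        conv_blks = []
        in_channels = 1
        # Convolutional part
        for (num_convs, out_channels) in conv_arch:
            conv_blks.append(vgg_block(num_convs, in_channels, out_channels))
            in_channels = out_channels

        return nn.Sequential(
            *conv_blks, nn.Flatten(),
            # Fully connected part (7 * 7 assumes a 224x224 input halved five times)
            nn.Linear(out_channels * 7 * 7, 4096), nn.ReLU(), nn.Dropout(0.5),
            nn.Linear(4096, 4096), nn.ReLU(), nn.Dropout(0.5),
            nn.Linear(4096, 10))

    net = vgg(conv_arch)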

NiN

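A notable difference between NiN and AlexNet is that NiN removes the fully connected layers entirely. Instead, NiN uses a NiN block whose number of output channels equals the number of label classes, followed by a global average pooling layer that produces the logits. One advantage of this design is that it significantly reduces the number of parameters the model needs. In practice, however, it can sometimes increase training time.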
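To get a feel for the size of that reduction, here is a rough parameter count. It is a back-of-the-envelope sketch: the helper names are made up, and the layer sizes are taken from the AlexNet and NiN code in this post (a 6400 → 4096 → 4096 → 10 fully connected head versus a final 384 → 10 NiN block followed by parameter-free global average pooling).

```python
def linear_params(n_in, n_out):
    """Weights plus biases of a fully connected layer."""
    return n_in * n_out + n_out


def conv_params(c_in, c_out, kernel):
    """Weights plus biases of a square-kernel convolution."""
    return c_in * c_out * kernel * kernel + c_out


# AlexNet-style head: three fully connected layers.
alexnet_head = (linear_params(6400, 4096)
                + linear_params(4096, 4096)
                + linear_params(4096, 10))

# NiN-style head: one 3x3 convolution and two 1x1 convolutions (384 -> 10 channels);
# the global average pooling layer that follows has no parameters.
nin_head = conv_params(384, 10, 3) + 2 * conv_params(10, 10, 1)

print(alexnet_head, nin_head)  # 43040778 34790
```

Under these assumptions the classification head shrinks from about 43 million parameters to under 35 thousand.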

    import torch
    from torch import nn

    def nin_block(in_channels, out_channels, kernel_size, strides, padding):
        return nn.Sequential(
            nn.Conv2d(in_channels, out_channels, kernel_size, strides, padding),
            nn.ReLU(),
            nn.Conv2d(out_channels, out_channels, kernel_size=1), nn.ReLU(),
            nn.Conv2d(out_channels, out_channels, kernel_size=1), nn.ReLU())

    net = nn.Sequential(
        nin_block(1, 96, kernel_size=11, strides=4, padding=0),
        nn.MaxPool2d(3, stride=2),
        nin_block(96, 256, kernel_size=5, strides=1, padding=2),
        nn.MaxPool2d(3, stride=2),
        nin_block(256, 384, kernel_size=3, strides=1, padding=1),
        nn.MaxPool2d(3, stride=2),
        nn.Dropout(0.5),
        # There are 10 label classes
        nin_block(384, 10, kernel_size=3, strides=1, padding=1),
        nn.AdaptiveAvgPool2d((1, 1)),
        # Turn the 4D output into a 2D output of shape (batch size, 10)
        nn.Flatten())

GoogLeNet

In GoogLeNet, the basic convolutional block is called the Inception block.
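An Inception block runs four parallel paths and concatenates their outputs along the channel dimension, so its output channel count is the sum of the last layer's channels on each path. The small check below (the helper name is made up for illustration) shows why, in the GoogLeNet configuration in this section, a block built with `Inception(192, 64, (96, 128), (16, 32), 32)` is followed by one that takes 256 input channels, and that one by a block taking 480.

```python
def inception_out_channels(c1, c2, c3, c4):
    """Channels after concatenating the four Inception paths.

    c1 and c4 are ints; c2 and c3 are (reduction, output) pairs, and only
    the second entry of each pair reaches the concatenation.
    """
    return c1 + c2[1] + c3[1] + c4


first = inception_out_channels(64, (96, 128), (16, 32), 32)
second = inception_out_channels(128, (128, 192), (32, 96), 64)
print(first, second)  # 256 480
```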

    import torch
    from torch import nn
    from torch.nn import functional as F

    class Inception(nn.Module):
        # c1 to c4 are the numbers of output channels of each path
        def __init__(self, in_channels, c1, c2, c3, c4, **kwargs):
            super(Inception, self).__init__(**kwargs)
            # Path 1: a single 1x1 convolution
            self.p1_1 = nn.Conv2d(in_channels, c1, kernel_size=1)
            # Path 2: a 1x1 convolution followed by a 3x3 convolution
            self.p2_1 = nn.Conv2d(in_channels, c2[0], kernel_size=1)
            self.p2_2 = nn.Conv2d(c2[0], c2[1], kernel_size=3, padding=1)
            # Path 3: a 1x1 convolution followed by a 5x5 convolution
            self.p3_1 = nn.Conv2d(in_channels, c3[0], kernel_size=1)
            self.p3_2 = nn.Conv2d(c3[0], c3[1], kernel_size=5, padding=2)
            # Path 4: a 3x3 max pooling layer followed by a 1x1 convolution
            self.p4_1 = nn.MaxPool2d(kernel_size=3, stride=1, padding=1)
            self.p4_2 = nn.Conv2d(in_channels, c4, kernel_size=1)

        def forward(self, x):
            p1 = F.relu(self.p1_1(x))
            p2 = F.relu(self.p2_2(F.relu(self.p2_1(x))))
            p3 = F.relu(self.p3_2(F.relu(self.p3_1(x))))
            p4 = F.relu(self.p4_2(self.p4_1(x)))
            # Concatenate the outputs along the channel dimension
            return torch.cat((p1, p2, p3, p4), dim=1)

    b1 = nn.Sequential(nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3),
                       nn.ReLU(),
                       nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

    b2 = nn.Sequential(nn.Conv2d(64, 64, kernel_size=1),
                       nn.ReLU(),
                       nn.Conv2d(64, 192, kernel_size=3, padding=1),
                       nn.ReLU(),
                       nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

    b3 = nn.Sequential(Inception(192, 64, (96, 128), (16, 32), 32),
                       Inception(256, 128, (128, 192), (32, 96), 64),
                       nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

    b4 = nn.Sequential(Inception(480, 192, (96, 208), (16, 48), 64),
                       Inception(512, 160, (112, 224), (24, 64), 64),
                       Inception(512, 128, (128, 256), (24, 64), 64),
                       Inception(512, 112, (144, 288), (32, 64), 64),
                       Inception(528, 256, (160, 320), (32, 128), 128),
                       nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

    b5 = nn.Sequential(Inception(832, 256, (160, 320), (32, 128), 128),
                       Inception(832, 384, (192, 384), (48, 128), 128),
                       nn.AdaptiveAvgPool2d((1, 1)),
                       nn.Flatten())

    net = nn.Sequential(b1, b2, b3, b4, b5, nn.Linear(1024, 10))

    # Print the output shape of each stage for a 96x96 single-channel input
    X = torch.rand(size=(1, 1, 96, 96))
    for layer in net:
        X = layer(X)
        print(layer.__class__.__name__, 'output shape:\t', X.shape)

ResNet

    import torch
    from torch import nn
    from torch.nn import functional as F

    class Residual(nn.Module):
        def __init__(self, input_channels, num_channels,
                     use_1x1conv=False, strides=1):
            super().__init__()
            self.conv1 = nn.Conv2d(input_channels, num_channels,
                                   kernel_size=3, padding=1, stride=strides)
            self.conv2 = nn.Conv2d(num_channels, num_channels,
                                   kernel_size=3, padding=1)
            # Optional 1x1 convolution so the shortcut matches the main path's shape
            if use_1x1conv:
                self.conv3 = nn.Conv2d(input_channels, num_channels,
                                       kernel_size=1, stride=strides)
            else:
                self.conv3 = None
            self.bn1 = nn.BatchNorm2d(num_channels)
            self.bn2 = nn.BatchNorm2d(num_channels)

        def forward(self, X):
            Y = F.relu(self.bn1(self.conv1(X)))
            Y = self.bn2(self.conv2(Y))
            if self.conv3:
                X = self.conv3(X)
            Y += X
            return F.relu(Y)

    b1 = nn.Sequential(nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3),
                       nn.BatchNorm2d(64), nn.ReLU(),
                       nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

    def resnet_block(input_channels, num_channels, num_residuals,
                     first_block=False):
        blk = []
        for i in range(num_residuals):
            # The first residual of every stage except the first halves the
            # resolution and changes the channel count
            if i == 0 and not first_block:
                blk.append(Residual(input_channels, num_channels,
                                    use_1x1conv=True, strides=2))
            else:
                blk.append(Residual(num_channels, num_channels))
        return blk

    b2 = nn.Sequential(*resnet_block(64, 64, 2, first_block=True))
    b3 = nn.Sequential(*resnet_block(64, 128, 2))
    b4 = nn.Sequential(*resnet_block(128, 256, 2))
    b5 = nn.Sequential(*resnet_block(256, 512, 2))

    net = nn.Sequential(b1, b2, b3, b4, b5,
                        nn.AdaptiveAvgPool2d((1, 1)),
                        nn.Flatten(), nn.Linear(512, 10))

    X = torch.rand(size=(1, 1, 224, 224))
    for layer in net:
        X = layer(X)
        print(layer.__class__.__name__, 'output shape:\t', X.shape)

DenseNet

    import torch
    from torch import nn

    def conv_block(input_channels, num_channels):
        return nn.Sequential(
            nn.BatchNorm2d(input_channels), nn.ReLU(),
            nn.Conv2d(input_channels, num_channels, kernel_size=3, padding=1))

    class DenseBlock(nn.Module):
        def __init__(self, num_convs, input_channels, num_channels):
            super(DenseBlock, self).__init__()
            layer = []
            for i in range(num_convs):
                layer.append(conv_block(
                    num_channels * i + input_channels, num_channels))
            self.net = nn.Sequential(*layer)

        def forward(self, X):
            for blk in self.net:
                Y = blk(X)
                # Concatenate each block's input and output along the channel dimension
                X = torch.cat((X, Y), dim=1)
            return X

A dense network mainly consists of two parts: dense blocks and transition layers.

    blk = DenseBlock(2, 3, 10)
    X = torch.randn(4, 3, 8, 8)
    Y = blk(X)
    Y.shape  # torch.Size([4, 23, 8, 8]): 3 input channels + 2 * 10 new ones

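Every dense block increases the number of channels, so stacking too many of them makes the model overly complex. A transition layer keeps this in check: it uses a 1×1 convolution to reduce the number of channels and an average pooling layer with stride 2 to halve the height and width, further lowering model complexity.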
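The interplay between dense blocks and transition layers is easiest to see by tracking channel counts alone. The sketch below is illustrative only: the function name is made up, and the defaults (64 starting channels, growth rate 32, four dense blocks of four convolutions) are those of the DenseNet code in this section.

```python
def densenet_channels(num_channels=64, growth_rate=32,
                      num_convs_in_dense_blocks=(4, 4, 4, 4)):
    """Return the channel count after each dense block and transition layer."""
    trace = []
    last = len(num_convs_in_dense_blocks) - 1
    for i, num_convs in enumerate(num_convs_in_dense_blocks):
        # Each convolution in a dense block adds growth_rate channels.
        num_channels += num_convs * growth_rate
        trace.append(("dense", num_channels))
        # A transition layer between dense blocks halves the channel count.
        if i != last:
            num_channels //= 2
            trace.append(("transition", num_channels))
    return trace


for kind, channels in densenet_channels():
    print(kind, channels)
```

With these defaults the trace ends at 248 channels, which is the input size of the final linear layer.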

    def transition_block(input_channels, num_channels):
        return nn.Sequential(
            nn.BatchNorm2d(input_channels), nn.ReLU(),
            nn.Conv2d(input_channels, num_channels, kernel_size=1),
            nn.AvgPool2d(kernel_size=2, stride=2))

    blk = transition_block(23, 10)

    b1 = nn.Sequential(
        nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3),
        nn.BatchNorm2d(64), nn.ReLU(),
        nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

    # num_channels is the current number of channels
    num_channels, growth_rate = 64, 32
    num_convs_in_dense_blocks = [4, 4, 4, 4]
    blks = []
    for i, num_convs in enumerate(num_convs_in_dense_blocks):
        blks.append(DenseBlock(num_convs, num_channels, growth_rate))
        # Number of output channels of the dense block just added
        num_channels += num_convs * growth_rate
        # Add a transition layer between dense blocks to halve the number of channels
        if i != len(num_convs_in_dense_blocks) - 1:
            blks.append(transition_block(num_channels, num_channels // 2))
            num_channels = num_channels // 2

    net = nn.Sequential(
        b1, *blks,
        nn.BatchNorm2d(num_channels), nn.ReLU(),
        nn.AdaptiveAvgPool2d((1, 1)),
        nn.Flatten(),
        nn.Linear(num_channels, 10))

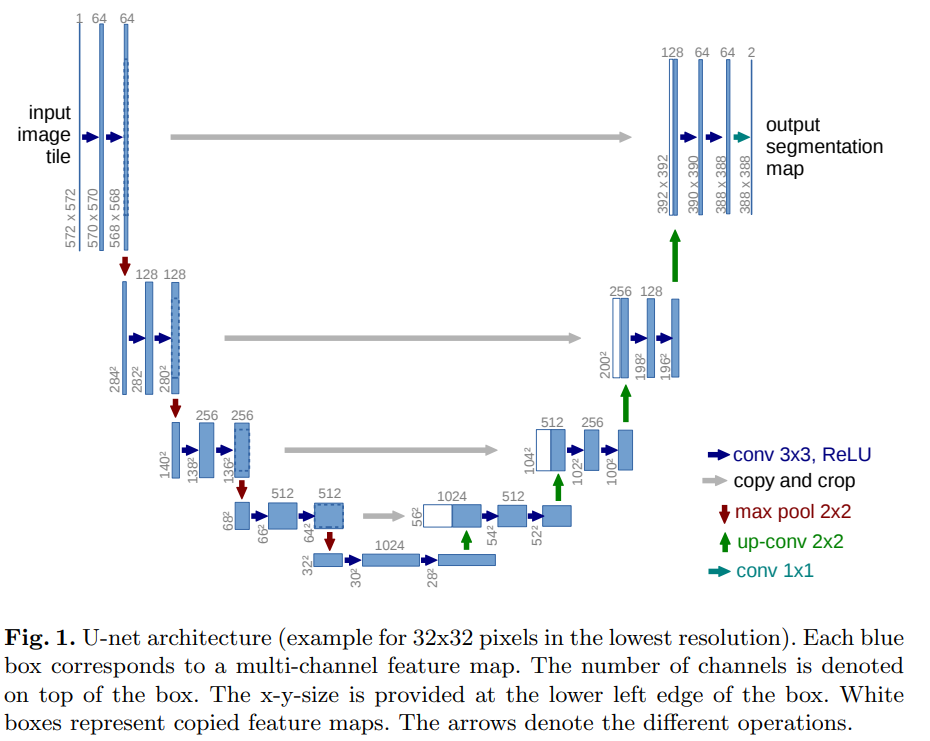

U-Net

    """ Parts of the U-Net model """

    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    class DoubleConv(nn.Module):
        """(convolution => [BN] => ReLU) * 2"""

        def __init__(self, in_channels, out_channels, mid_channels=None):
            super().__init__()
            if not mid_channels:
                mid_channels = out_channels
            self.double_conv = nn.Sequential(
                nn.Conv2d(in_channels, mid_channels, kernel_size=3, padding=1, bias=False),
                nn.BatchNorm2d(mid_channels),
                nn.ReLU(inplace=True),
                nn.Conv2d(mid_channels, out_channels, kernel_size=3, padding=1, bias=False),
                nn.BatchNorm2d(out_channels),
                nn.ReLU(inplace=True)
            )

        def forward(self, x):
            return self.double_conv(x)


    class Down(nn.Module):
        """Downscaling with maxpool then double conv"""

        def __init__(self, in_channels, out_channels):
            super().__init__()
            self.maxpool_conv = nn.Sequential(
                nn.MaxPool2d(2),
                DoubleConv(in_channels, out_channels)
            )

        def forward(self, x):
            return self.maxpool_conv(x)


    class Up(nn.Module):
        """Upscaling then double conv"""

        def __init__(self, in_channels, out_channels, bilinear=True):
            super().__init__()

            # if bilinear, use the normal convolutions to reduce the number of channels
            if bilinear:
                self.up = nn.Upsample(scale_factor=2, mode='bilinear', align_corners=True)
                self.conv = DoubleConv(in_channels, out_channels, in_channels // 2)
            else:
                self.up = nn.ConvTranspose2d(in_channels, in_channels // 2, kernel_size=2, stride=2)
                self.conv = DoubleConv(in_channels, out_channels)

        def forward(self, x1, x2):
            x1 = self.up(x1)
            # input is CHW
            diffY = x2.size()[2] - x1.size()[2]
            diffX = x2.size()[3] - x1.size()[3]

            # Pad the upsampled map so it matches the skip connection's size
            x1 = F.pad(x1, [diffX // 2, diffX - diffX // 2,
                            diffY // 2, diffY - diffY // 2])
            x = torch.cat([x2, x1], dim=1)
            return self.conv(x)


    class OutConv(nn.Module):
        def __init__(self, in_channels, out_channels):
            super(OutConv, self).__init__()
            self.conv = nn.Conv2d(in_channels, out_channels, kernel_size=1)

        def forward(self, x):
            return self.conv(x)


    class UNet(nn.Module):
        def __init__(self, n_channels, n_classes, bilinear=False):
            super(UNet, self).__init__()
            self.n_channels = n_channels
            self.n_classes = n_classes
            self.bilinear = bilinear

            self.inc = DoubleConv(n_channels, 64)
            self.down1 = Down(64, 128)
            self.down2 = Down(128, 256)
            self.down3 = Down(256, 512)
            factor = 2 if bilinear else 1
            self.down4 = Down(512, 1024 // factor)
            self.up1 = Up(1024, 512 // factor, bilinear)
            self.up2 = Up(512, 256 // factor, bilinear)
            self.up3 = Up(256, 128 // factor, bilinear)
            self.up4 = Up(128, 64, bilinear)
            self.outc = OutConv(64, n_classes)

        def forward(self, x):
            # Contracting path
            x1 = self.inc(x)
            x2 = self.down1(x1)
            x3 = self.down2(x2)
            x4 = self.down3(x3)
            x5 = self.down4(x4)
            # Expanding path with skip connections
            x = self.up1(x5, x4)
            x = self.up2(x, x3)
            x = self.up3(x, x2)
            x = self.up4(x, x1)
            logits = self.outc(x)
            return logits

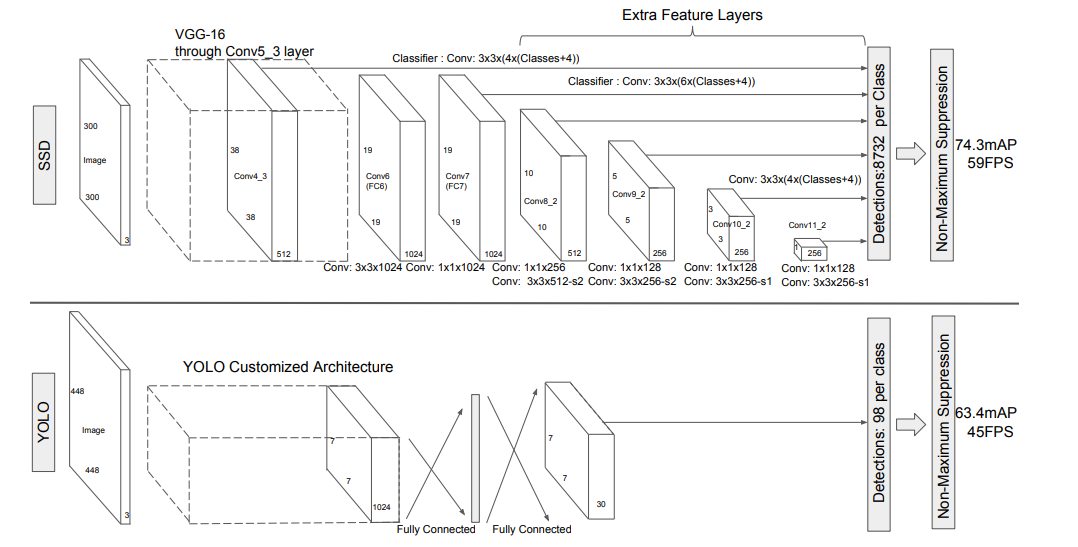

SSD

See 13.7. Single Shot Multibox Detection (SSD), Dive into Deep Learning (动手学深度学习) 2.0.0 documentation (d2l.ai)

    # #!/usr/bin/env python
    # -*- coding:utf-8 -*-
    # Copyleft (C) 2024 proanimer, Inc. All Rights Reserved
    # author:proanimer
    # createTime:2024/6/12 10:42 PM
    # lastModifiedTime:2024/6/12 10:42 PM
    # file:SSD.py
    # software: classicNets
    #

    import torch
    import torch.nn as nn
    from torchvision.models import vgg19


    class SSD(nn.Module):
        def __init__(self):
            super().__init__()
            # Pretrained VGG-19 backbone. Newer torchvision releases prefer the
            # `weights=` argument over `pretrained=True`.
            vgg = vgg19(pretrained=True)
            vgg.eval()
            self.conv = vgg.features
            # Extra layers on top of the backbone. Each unpadded 3x3 convolution
            # shrinks the feature map by 2 in each dimension, so the input image
            # must be large enough for conv6 to still see at least a 3x3 map.
            self.conv1 = nn.Conv2d(512, 1024, 3)
            self.conv2 = nn.Conv2d(1024, 1024, 1)
            self.conv3 = nn.Sequential(
                nn.Conv2d(1024, 256, 1),
                nn.Conv2d(256, 512, 3)
            )
            self.conv4 = nn.Sequential(
                nn.Conv2d(512, 128, 1),
                nn.Conv2d(128, 256, 3)
            )
            self.conv5 = nn.Sequential(
                nn.Conv2d(256, 128, 1),
                nn.Conv2d(128, 256, 3)
            )
            self.conv6 = nn.Sequential(
                nn.Conv2d(256, 128, 1),
                nn.Conv2d(128, 256, 3)
            )

        def forward(self, x):
            # Return feature maps at six scales, from finest to coarsest
            feat_1 = self.conv(x)
            feat = self.conv1(feat_1)
            feat_2 = self.conv2(feat)
            feat_3 = self.conv3(feat_2)
            feat_4 = self.conv4(feat_3)
            feat_5 = self.conv5(feat_4)
            feat_6 = self.conv6(feat_5)
            return feat_1, feat_2, feat_3, feat_4, feat_5, feat_6

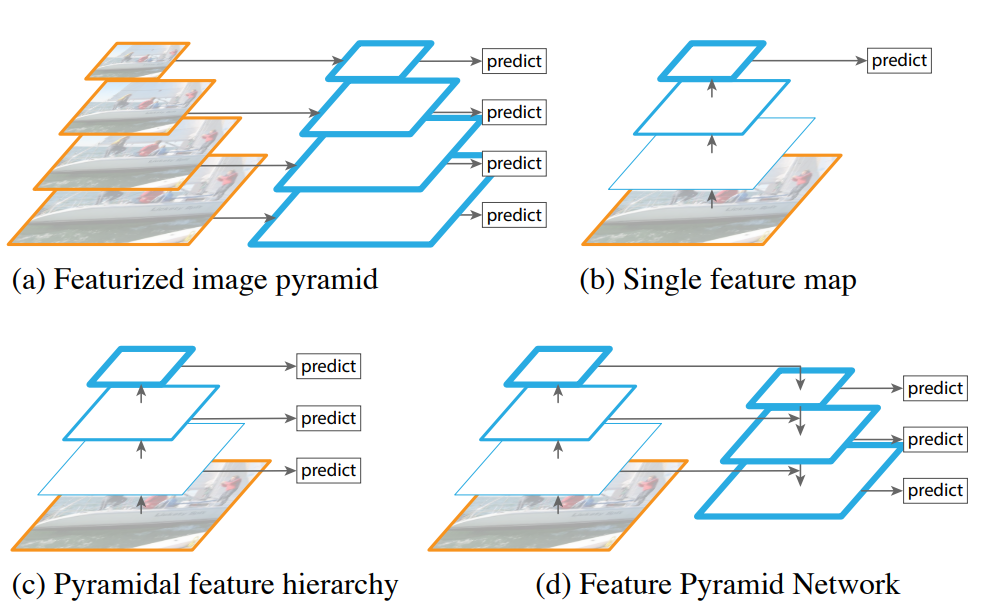

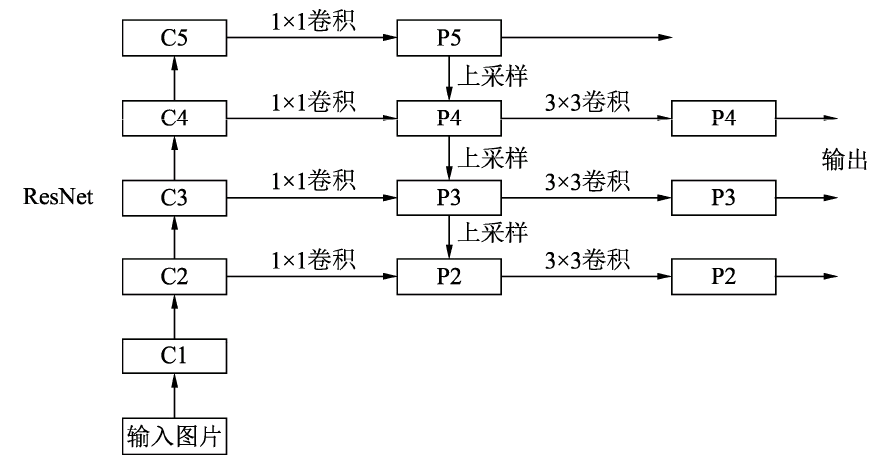

Feature Pyramid Networks for Object Detection

Finally, the results obtained at the different scales are ...

    # #!/usr/bin/env python
    # -*- coding:utf-8 -*-
    # Copyleft (C) 2024 proanimer, Inc. All Rights Reserved
    # author:proanimer
    # createTime:2024/6/12 9:13 PM
    # lastModifiedTime:2024/6/12 9:13 PM
    # file:fpn.py
    # software: classicNets
    #

    import torch.nn as nn
    import torch.nn.functional as F


    # First define the basic ResNet class, i.e. ResNet's basic building block
    class Bottleneck(nn.Module):
        expansion = 4  # channel expansion factor

        def __init__(self, in_planes, planes, stride=1, downsample=None):
            super(Bottleneck, self).__init__()
            self.bottleneck = nn.Sequential(
                nn.Conv2d(in_planes, planes, 1, bias=False),
                nn.BatchNorm2d(planes),
                nn.ReLU(inplace=True),
                nn.Conv2d(planes, planes, 3, stride, 1, bias=False),
                nn.BatchNorm2d(planes),
                nn.ReLU(inplace=True),
                nn.Conv2d(planes, self.expansion * planes, 1, bias=False),
                nn.BatchNorm2d(self.expansion * planes),
            )
            self.relu = nn.ReLU(inplace=True)
            self.downsample = downsample

        def forward(self, x):
            identity = x
            out = self.bottleneck(x)
            if self.downsample is not None:
                identity = self.downsample(x)
            out += identity
            out = self.relu(out)
            return out


    # The FPN class
    class FPN(nn.Module):
        # `layers` is a list giving the number of Bottleneck blocks in each
        # stage of the bottom-up ResNet
        def __init__(self, layers):
            super(FPN, self).__init__()
            self.inplanes = 64

            # The next four statements form the C1 module that processes the input
            # (it corresponds to the figure in the blog post)
            self.conv1 = nn.Conv2d(3, 64, 7, 2, 3, bias=False)
            self.bn1 = nn.BatchNorm2d(64)
            self.relu = nn.ReLU(inplace=True)
            self.maxpool = nn.MaxPool2d(3, 2, 1)

            # Build the bottom-up C2, C3, C4, C5
            self.layer1 = self._make_layer(64, layers[0])
            self.layer2 = self._make_layer(128, layers[1], 2)
            self.layer3 = self._make_layer(256, layers[2], 2)
            self.layer4 = self._make_layer(512, layers[3], 2)

            # Top layer: reduce the number of channels of C5 to obtain P5
            self.toplayer = nn.Conv2d(2048, 256, 1, 1, 0)

            # 3x3 convolutions that fuse the merged maps; they reduce the aliasing
            # effect of upsampling and produce the final feature maps
            self.smooth1 = nn.Conv2d(256, 256, 3, 1, 1)
            self.smooth2 = nn.Conv2d(256, 256, 3, 1, 1)
            self.smooth3 = nn.Conv2d(256, 256, 3, 1, 1)

            # Lateral connections, which make the channel counts match
            self.latlayer1 = nn.Conv2d(1024, 256, 1, 1, 0)
            self.latlayer2 = nn.Conv2d(512, 256, 1, 1, 0)
            self.latlayer3 = nn.Conv2d(256, 256, 1, 1, 0)

        # Builds the C2-C5 stages. Note the difference between stride 1 and 2:
        # C2 is obtained without downsampling
        def _make_layer(self, planes, blocks, stride=1):
            downsample = None
            if stride != 1 or self.inplanes != Bottleneck.expansion * planes:
                downsample = nn.Sequential(
                    nn.Conv2d(self.inplanes, Bottleneck.expansion * planes, 1, stride, bias=False),
                    nn.BatchNorm2d(Bottleneck.expansion * planes)
                )
            layers = []
            layers.append(Bottleneck(self.inplanes, planes, stride, downsample))
            self.inplanes = planes * Bottleneck.expansion
            for i in range(1, blocks):
                layers.append(Bottleneck(self.inplanes, planes))
            return nn.Sequential(*layers)

        # Top-down upsampling module
        def _upsample_add(self, x, y):
            _, _, H, W = y.shape
            # F.interpolate replaces the deprecated F.upsample
            return F.interpolate(x, size=(H, W), mode='bilinear', align_corners=False) + y

        def forward(self, x):
            # Bottom-up pathway
            c1 = self.maxpool(self.relu(self.bn1(self.conv1(x))))
            c2 = self.layer1(c1)
            c3 = self.layer2(c2)
            c4 = self.layer3(c3)
            c5 = self.layer4(c4)

            # Top-down pathway
            p5 = self.toplayer(c5)
            p4 = self._upsample_add(p5, self.latlayer1(c4))
            p3 = self._upsample_add(p4, self.latlayer2(c3))
            p2 = self._upsample_add(p3, self.latlayer3(c2))

            # Convolutional fusion (smoothing)
            p4 = self.smooth1(p4)
            p3 = self.smooth2(p3)
            p2 = self.smooth3(p2)
            return p2, p3, p4, p5

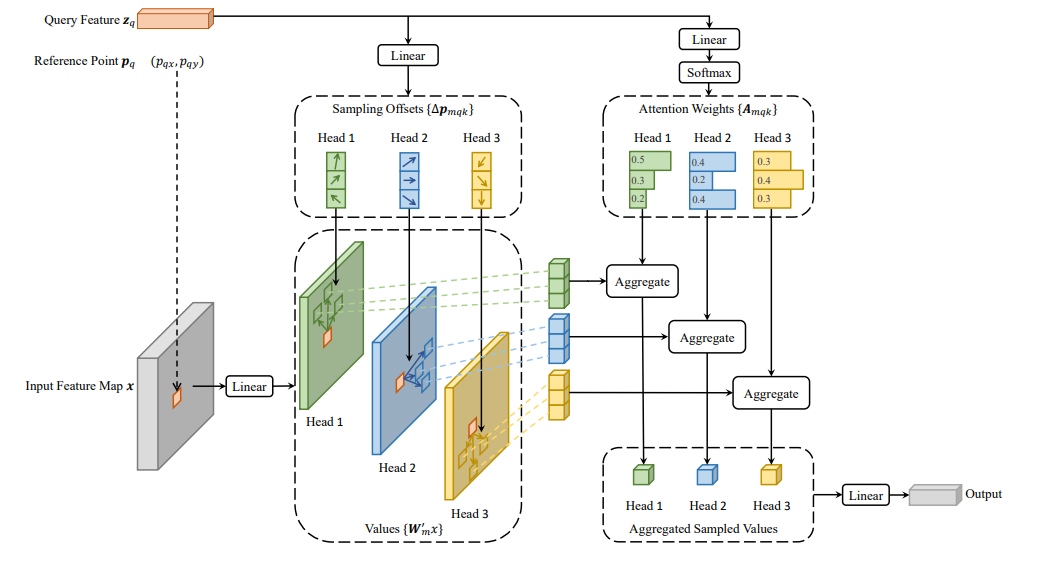

Deformable Conv

Deformable convolution; there is also deformable attention. Both are plug-and-play modules.

    import torch
    from torch import nn


    class DeformConv2d(nn.Module):
        def __init__(self, inc, outc, kernel_size=3, padding=1, stride=1, bias=None, modulation=False):
            """
            Args:
                modulation (bool, optional): If True, Modulated Deformable Convolution (Deformable ConvNets v2).
            """
            super(DeformConv2d, self).__init__()
            self.kernel_size = kernel_size
            self.padding = padding
            self.stride = stride
            self.zero_padding = nn.ZeroPad2d(padding)
            # conv is the actual convolution. Its stride equals the kernel size because the
            # feature map it runs on is built by expanding every output location into the
            # kernel_size x kernel_size points sampled for it.
            self.conv = nn.Conv2d(inc, outc, kernel_size=kernel_size, stride=kernel_size, bias=bias)

            # p_conv generates the offsets. It has 2 * kernel_size^2 output channels:
            # an x and a y offset for every kernel position.
            self.p_conv = nn.Conv2d(inc, 2*kernel_size*kernel_size, kernel_size=3, padding=1, stride=stride)
            nn.init.constant_(self.p_conv.weight, 0)
            self.p_conv.register_backward_hook(self._set_lr)

            # modulation is optional. If True, every kernel position also gets a learned weight.
            self.modulation = modulation
            if modulation:
                self.m_conv = nn.Conv2d(inc, kernel_size*kernel_size, kernel_size=3, padding=1, stride=stride)
                nn.init.constant_(self.m_conv.weight, 0)
                self.m_conv.register_backward_hook(self._set_lr)

        @staticmethod
        def _set_lr(module, grad_input, grad_output):
            # Intended to scale the gradients of the offset/modulation convolutions by 0.1.
            # As written it only rebinds local names and returns None, so the gradients
            # pass through unchanged.
            grad_input = (grad_input[i] * 0.1 for i in range(len(grad_input)))
            grad_output = (grad_output[i] * 0.1 for i in range(len(grad_output)))

        def forward(self, x):
            offset = self.p_conv(x)
            if self.modulation:
                m = torch.sigmoid(self.m_conv(x))

            dtype = offset.data.type()
            ks = self.kernel_size
            N = offset.size(1) // 2

            if self.padding:
                x = self.zero_padding(x)

            # (b, 2N, h, w)
            p = self._get_p(offset, dtype)

            # (b, h, w, 2N)
            p = p.contiguous().permute(0, 2, 3, 1)
            q_lt = p.detach().floor()
            q_rb = q_lt + 1

            q_lt = torch.cat([torch.clamp(q_lt[..., :N], 0, x.size(2)-1), torch.clamp(q_lt[..., N:], 0, x.size(3)-1)], dim=-1).long()
            q_rb = torch.cat([torch.clamp(q_rb[..., :N], 0, x.size(2)-1), torch.clamp(q_rb[..., N:], 0, x.size(3)-1)], dim=-1).long()
            q_lb = torch.cat([q_lt[..., :N], q_rb[..., N:]], dim=-1)
            q_rt = torch.cat([q_rb[..., :N], q_lt[..., N:]], dim=-1)

            # clip p
            p = torch.cat([torch.clamp(p[..., :N], 0, x.size(2)-1), torch.clamp(p[..., N:], 0, x.size(3)-1)], dim=-1)

            # bilinear kernel (b, h, w, N)
            g_lt = (1 + (q_lt[..., :N].type_as(p) - p[..., :N])) * (1 + (q_lt[..., N:].type_as(p) - p[..., N:]))
            g_rb = (1 - (q_rb[..., :N].type_as(p) - p[..., :N])) * (1 - (q_rb[..., N:].type_as(p) - p[..., N:]))
            g_lb = (1 + (q_lb[..., :N].type_as(p) - p[..., :N])) * (1 - (q_lb[..., N:].type_as(p) - p[..., N:]))
            g_rt = (1 - (q_rt[..., :N].type_as(p) - p[..., :N])) * (1 + (q_rt[..., N:].type_as(p) - p[..., N:]))

            # (b, c, h, w, N)
            x_q_lt = self._get_x_q(x, q_lt, N)
            x_q_rb = self._get_x_q(x, q_rb, N)
            x_q_lb = self._get_x_q(x, q_lb, N)
            x_q_rt = self._get_x_q(x, q_rt, N)

            # (b, c, h, w, N)
            x_offset = g_lt.unsqueeze(dim=1) * x_q_lt + \
                       g_rb.unsqueeze(dim=1) * x_q_rb + \
                       g_lb.unsqueeze(dim=1) * x_q_lb + \
                       g_rt.unsqueeze(dim=1) * x_q_rt

            # modulation
            if self.modulation:
                m = m.contiguous().permute(0, 2, 3, 1)
                m = m.unsqueeze(dim=1)
                m = torch.cat([m for _ in range(x_offset.size(1))], dim=1)
                x_offset *= m

            x_offset = self._reshape_x_offset(x_offset, ks)
            out = self.conv(x_offset)

            return out

        def _get_p_n(self, N, dtype):
            # The kernel centre sits at half the kernel size, so moving half a kernel
            # left/up from the centre gives the start point and half a kernel
            # right/down gives the end point.
            p_n_x, p_n_y = torch.meshgrid(
                torch.arange(-(self.kernel_size-1)//2, (self.kernel_size-1)//2+1),
                torch.arange(-(self.kernel_size-1)//2, (self.kernel_size-1)//2+1))
            # (2N, 1)
            p_n = torch.cat([torch.flatten(p_n_x), torch.flatten(p_n_y)], 0)
            p_n = p_n.view(1, 2*N, 1, 1).type(dtype)

            return p_n

        def _get_p_0(self, h, w, N, dtype):
            # p_0_x and p_0_y are the coordinates on the input feature map that
            # each output location maps to.
            p_0_x, p_0_y = torch.meshgrid(
                torch.arange(1, h*self.stride+1, self.stride),
                torch.arange(1, w*self.stride+1, self.stride))
            p_0_x = torch.flatten(p_0_x).view(1, 1, h, w).repeat(1, N, 1, 1)
            p_0_y = torch.flatten(p_0_y).view(1, 1, h, w).repeat(1, N, 1, 1)
            p_0 = torch.cat([p_0_x, p_0_y], 1).type(dtype)

            return p_0

        # Sampling position = output location (kernel centre) + relative position of each
        # kernel element + learned offset.
        # p_0 maps each output location to the kernel centre on the input feature map;
        # p_n holds the relative coordinates of every kernel element around p_0.
        def _get_p(self, offset, dtype):
            N, h, w = offset.size(1)//2, offset.size(2), offset.size(3)

            # (1, 2N, 1, 1)
            p_n = self._get_p_n(N, dtype)
            # (1, 2N, h, w)
            p_0 = self._get_p_0(h, w, N, dtype)
            p = p_0 + p_n + offset
            return p

        def _get_x_q(self, x, q, N):
            # Gather the input values at the integer neighbour positions q that are
            # used for bilinear interpolation.
            b, h, w, _ = q.size()
            padded_w = x.size(3)
            c = x.size(1)
            # (b, c, h*w)
            x = x.contiguous().view(b, c, -1)

            # (b, h, w, N)
            index = q[..., :N]*padded_w + q[..., N:]  # offset_x*w + offset_y
            # (b, c, h*w*N)
            index = index.contiguous().unsqueeze(dim=1).expand(-1, c, -1, -1, -1).contiguous().view(b, c, -1)

            x_offset = x.gather(dim=-1, index=index).contiguous().view(b, c, h, w, N)

            return x_offset

        @staticmethod
        def _reshape_x_offset(x_offset, ks):
            b, c, h, w, N = x_offset.size()
            x_offset = torch.cat([x_offset[..., s:s+ks].contiguous().view(b, c, h, w*ks) for s in range(0, N, ks)], dim=-1)
            x_offset = x_offset.contiguous().view(b, c, h*ks, w*ks)

            return x_offset
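Because the learned offsets are fractional, the module above reads the input at non-integer positions with bilinear interpolation. The scalar sketch below is an illustration written for this post, not part of the module: the function name and the plain nested-list input are assumptions, and only the weight names `g_lt`, `g_rb`, `g_lb`, `g_rt` are kept so they can be compared with `forward`.

```python
import math


def bilinear_sample(grid, px, py):
    """Sample a 2D list `grid` (at least 2x2) at fractional row px and column py."""
    h, w = len(grid), len(grid[0])
    # Clip the sampling point to the grid, as the module does before weighting.
    px = min(max(px, 0.0), h - 1)
    py = min(max(py, 0.0), w - 1)
    # Integer neighbours: (x0, y0) is "left-top", (x1, y1) is "right-bottom".
    x0 = min(math.floor(px), h - 2)
    y0 = min(math.floor(py), w - 2)
    x1, y1 = x0 + 1, y0 + 1
    # Each weight is the product of the per-axis closeness to that neighbour.
    g_lt = (1 + (x0 - px)) * (1 + (y0 - py))
    g_rb = (1 - (x1 - px)) * (1 - (y1 - py))
    g_lb = (1 + (x0 - px)) * (1 - (y1 - py))
    g_rt = (1 - (x1 - px)) * (1 + (y0 - py))
    return (g_lt * grid[x0][y0] + g_rb * grid[x1][y1]
            + g_lb * grid[x0][y1] + g_rt * grid[x1][y0])


grid = [[0.0, 1.0],
        [2.0, 3.0]]
print(bilinear_sample(grid, 0.5, 0.5))  # 1.5, the average of the four corners
```

The four weights always sum to 1, and at integer positions the sample reduces to the grid value itself.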

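Among these models, NiN's 1×1 convolutions and ResNet's residual connections became standard building blocks for later, more complex models such as U-Net. In turn, the multi-scale features and skip connections of U-Net and FPN are used in many tasks such as object detection.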

RNN GRU LSTM

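Light Before the Night

Before the Transformer, we thought the bubble was about to burst.

Kai-Fu Lee says 2018 is the year the AI bubble bursts, LeCun approves - Kai-Fu Lee - IT Industry - 深度开源 (open-open.com)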

Attention

Transformer

The Annotated Transformer (harvard.edu)

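At a high level, the Transformer encoder is a stack of identical layers, each with two sublayers. The first is multi-head self-attention pooling; the second is a positionwise feed-forward network. When computing the encoder's self-attention, the queries, keys, and values all come from the output of the previous encoder layer. Inspired by residual networks (ResNet), each sublayer uses a residual connection. For any input $\mathbf{x} \in \mathbb{R}^d$ at any position of the sequence, the Transformer requires $\mathrm{sublayer}(\mathbf{x}) \in \mathbb{R}^d$, so that the residual sum $\mathbf{x} + \mathrm{sublayer}(\mathbf{x}) \in \mathbb{R}^d$ is well defined. Layer normalization is applied immediately after the residual addition.

As a result, the Transformer encoder outputs a $d$-dimensional representation vector for each position of the input sequence.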
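The encoder layer described above can be sketched in a few lines of PyTorch. This is a minimal sketch rather than a reference implementation: the class name and the sizes (`d_model=32`, `num_heads=4`, `d_ff=64`) are arbitrary choices for illustration, dropout is left out, and the built-in `nn.MultiheadAttention` stands in for a hand-written attention module.

```python
import torch
from torch import nn


class EncoderLayer(nn.Module):
    """One encoder layer: self-attention, then a positionwise feed-forward network.

    Each sublayer is wrapped as LayerNorm(x + sublayer(x)).
    """

    def __init__(self, d_model=32, num_heads=4, d_ff=64):
        super().__init__()
        self.attn = nn.MultiheadAttention(d_model, num_heads, batch_first=True)
        self.ffn = nn.Sequential(
            nn.Linear(d_model, d_ff), nn.ReLU(), nn.Linear(d_ff, d_model))
        self.norm1 = nn.LayerNorm(d_model)
        self.norm2 = nn.LayerNorm(d_model)

    def forward(self, x):
        # Queries, keys, and values all come from the same input.
        attn_out, _ = self.attn(x, x, x)
        x = self.norm1(x + attn_out)          # residual connection, then layer norm
        return self.norm2(x + self.ffn(x))    # same pattern for the feed-forward sublayer


layer = EncoderLayer()
x = torch.randn(2, 5, 32)   # (batch, sequence length, d_model)
y = layer(x)
print(y.shape)  # torch.Size([2, 5, 32])
```

Since every sublayer maps $\mathbb{R}^d$ to $\mathbb{R}^d$, the output has the same shape as the input, which is what allows identical layers to be stacked.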

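The Transformer decoder is also a stack of identical layers that use residual connections and layer normalization. In addition to the two sublayers described for the encoder, the decoder inserts a third sublayer between them, called encoder-decoder attention. In encoder-decoder attention, the queries come from the output of the previous decoder layer, while the keys and values come from the output of the whole encoder. In decoder self-attention, the queries, keys, and values all come from the output of the previous decoder layer. However, each position in the decoder may only attend to positions up to that position. This masked attention preserves the auto-regressive property, ensuring that a prediction depends only on the output tokens already generated.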
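The masking can be illustrated with single-head scaled dot-product attention. The sketch below makes several simplifying assumptions: there are no learned projections, one head, and no batch dimension, and the function name is made up for this example.

```python
import math

import torch


def causal_self_attention(q, k, v):
    """Scaled dot-product attention where position i only sees positions <= i."""
    T, d = q.shape[-2], q.shape[-1]
    scores = q @ k.transpose(-2, -1) / math.sqrt(d)
    # True above the diagonal marks "future" positions that must be hidden.
    future = torch.triu(torch.ones(T, T, dtype=torch.bool), diagonal=1)
    scores = scores.masked_fill(future, float("-inf"))
    weights = torch.softmax(scores, dim=-1)   # masked entries get weight 0
    return weights @ v, weights


torch.manual_seed(0)
x = torch.randn(4, 8)   # 4 positions, 8 features
out, weights = causal_self_attention(x, x, x)
print(weights)          # lower-triangular: row i is zero after column i
```

The first position can only attend to itself, so its output equals its own value vector; later positions mix in progressively more of the sequence.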

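Transformers are currently used across the board, with applications to all kinds of tasks in CV, NLP, speech, and other fields.

Below is a general-purpose object detection approach built on the transformer.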

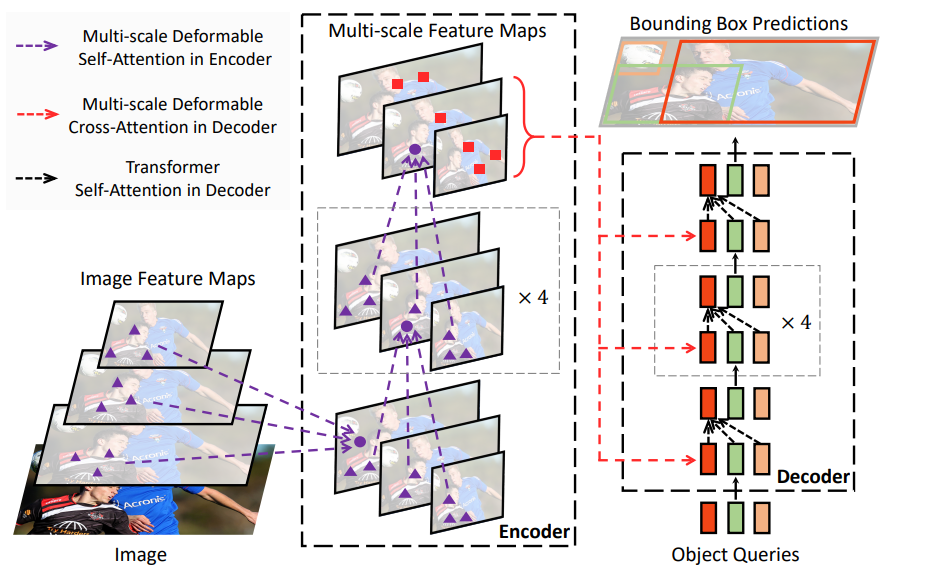

DETR

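Unlike traditional computer vision techniques, DETR treats object detection as a direct set prediction problem. It consists of a set-based global loss, which forces unique predictions via bipartite matching, and a transformer encoder-decoder architecture.

Given a small fixed set of learned object queries, DETR reasons about the relations between objects and the global image context, and outputs the final set of predictions directly and in parallel. Because all predictions are decoded in parallel rather than one at a time, DETR is fast and efficient.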
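The bipartite matching step can be illustrated on a toy cost matrix. The sketch below is a stand-in, not DETR's implementation: DETR uses the Hungarian algorithm with a cost that combines class and box terms, whereas here the cost values are invented and the optimal one-to-one assignment is found by brute force, which only works for tiny examples.

```python
from itertools import permutations


def match_predictions(cost):
    """Find the one-to-one assignment of predictions to targets with minimal total cost.

    cost[i][j] is the cost of assigning prediction j to target i.
    Returns (assignment, total), where assignment[i] is the prediction index
    matched to target i. Unmatched predictions would be treated as "no object".
    """
    num_targets = len(cost)
    num_preds = len(cost[0])
    best_total, best_assignment = float("inf"), None
    for perm in permutations(range(num_preds), num_targets):
        total = sum(cost[i][j] for i, j in enumerate(perm))
        if total < best_total:
            best_total, best_assignment = total, perm
    return best_assignment, best_total


# Two ground-truth objects, three predictions (toy numbers).
cost = [
    [0.9, 0.1, 0.5],
    [0.2, 0.8, 0.7],
]
assignment, total = match_predictions(cost)
print(assignment)  # (1, 0): target 0 -> prediction 1, target 1 -> prediction 0
```

Because each prediction can be matched to at most one target, duplicate predictions of the same object are penalized by the loss, which is what forces unique predictions.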

detr_demo.ipynb - Colab (google.com)

Deformable DETR

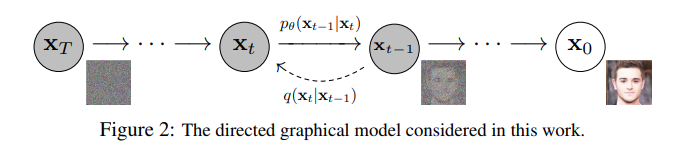

Diffusion Models

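The shining light of generative modeling after VAEs and GANs.

For the code, see The Annotated Diffusion Model (huggingface.co)

- Diffusion Models as a kind of VAE | Angus Turner

- yangqy1110/Diffusion-Models: a collection of beginner resources on diffusion model principles and PyTorch implementations (github.com)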

- mikonvergence/DiffusionFastForward: DiffusionFastForward: a free course and experimental framework for diffusion-based generative models (github.com)

- diff-usion/Awesome-Diffusion-Models: A collection of resources and papers on Diffusion Models (github.com)

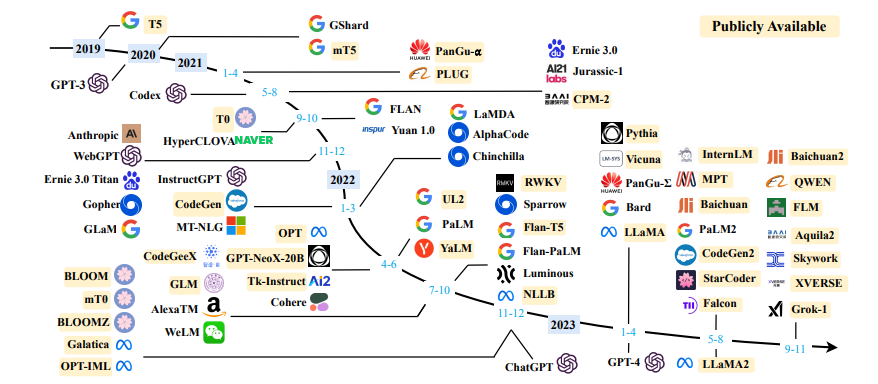

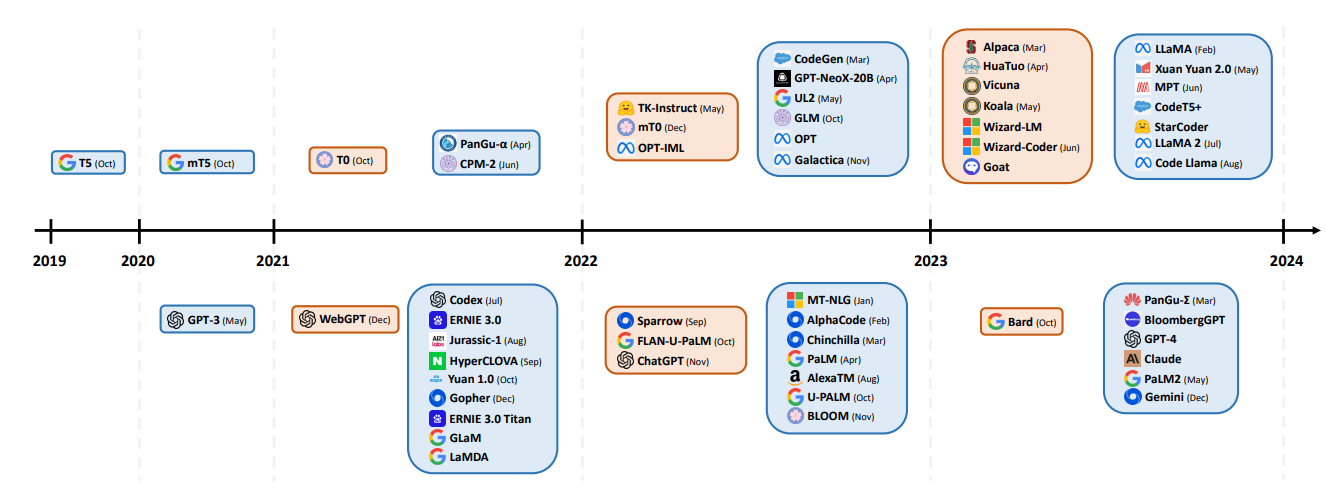

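Modern Large Models

Large models currently dominate the field. Of course, people also hope that alternative approaches will emerge.

LLM

Andrej Karpathy's nanoGPT and his GPT-2 reproduction are good references.

References

Learning PyTorch: Zero to Mastery Learn PyTorch for Deep Learning

Learning the classics: Dive into Deep Learning (《动手学深度学习》), 动手学深度学习 2.0.0 documentation (d2l.ai)

Learning attention and the Transformer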

- The Annotated Transformer (harvard.edu)

- xmu-xiaoma666/External-Attention-pytorch: 🍀 Pytorch implementation of various Attention Mechanisms, MLP, Re-parameter, Convolution, which is helpful to further understand papers.⭐⭐⭐ (github.com)

Learning LLMs

Let’s build GPT: from scratch, in code, spelled out. (youtube.com)

naklecha/llama3-from-scratch: llama3 implementation one matrix multiplication at a time (github.com)

- Introduction - The Large Language Model Playbook (cyrilzakka.github.io)

Personal blogs for learning diffusion models and large models